Compiling a Neural Net to C for a 1,744× speedup

#neural

slightknack.dev · Compiling a Neural Net to C for a 1,744× speedup · Isaac Clayton · A cozy little corner of the web.

#Zoomposium with Dr. #Gabriele #Scheler: “The #language of the #brain - or how #AI can learn from #biological #language #models”

There is a #paradigmshift away from the purely information-technological-mechanistic, purely data-driven #Big #Data concept of #LLMs towards increasingly information-biological-polycontextural, structure-driven #artificial, #neural #networks (#KNN) concepts.

More at: https://philosophies.de/index.php/2024/11/18/sprache-des-gehirns/

Spiking Neural Networks: Brain-Inspired Chips That Could Keep Your Data Safe Neuromorphic computi...

https://www.securityweek.com/spiking-neural-networks-brain-inspired-chips-that-could-keep-your-data-safe/

#Artificial #Intelligence #data #protection #privacy #Spiking #Neural #Networks

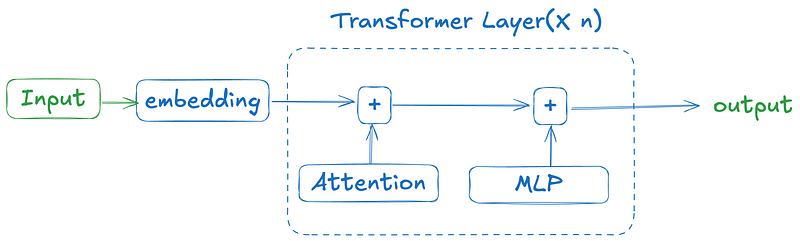

Transformer neural net learns to run Conway's Game of Life just from examples

sidsite · Transformer neural net learns to run Conway’s Game of Life just from examples · The site of Sid

Modern Deep Convolutional Neural Networks with PyTorch Image Recognition with Convolutional Neura...

https://studybullet.com/course/modern-deep-convolutional-neural-networks-with-pytorch-2/

#100% #Free #Courses #StudyBullet-20 #Convolutional #Neural #Networks #Deep #Learning #Free #Courses

Recurrent Networks Hello World in Clojure with new Deep Diamond RNN support on CPU and GPU I'...

http://dragan.rocks/articles/22/Recurrent-networks-hello-world-sequence-prediction-in-Clojure-with-new-Deep-Diamond

#Clojure, #RNN, #Deep #Diamond, #Learning, #Recurrent #Neural #Networks

dragan.rocks · Recurrent Networks Hello World in Clojure with new Deep Diamond RNN support on CPU and GPU · The support for Recurrent Neural Networks has just landed in Deep Diamond. Let's walk through a complete Hello World example!

Chonky – a neural approach for text semantic chunking

Circuit Tracing: A Step Closer to Understanding Large Language Models Reverse-engineering large ...

https://towardsdatascience.com/circuit-tracing-a-step-closer-to-understanding-large-language-models/

#Machine #Learning #AI #Interpretability #Llm #Neural #Network #Transformer

Neural Graffiti – Liquid Memory Layer for LLMs

(2016) Interactive Neural Network Art

otoro.net · Interactive Neural Network Art · ōtoro.net

A legacy of industrial technology excellence: UTSI International turns 40 As UTSI International c...

https://houston.innovationmap.com/utsi-international-at-40-2671265116.html

#Api #compliance #Artificial #neural #network #Control #networks #Control #room #management #Cybersecurity
